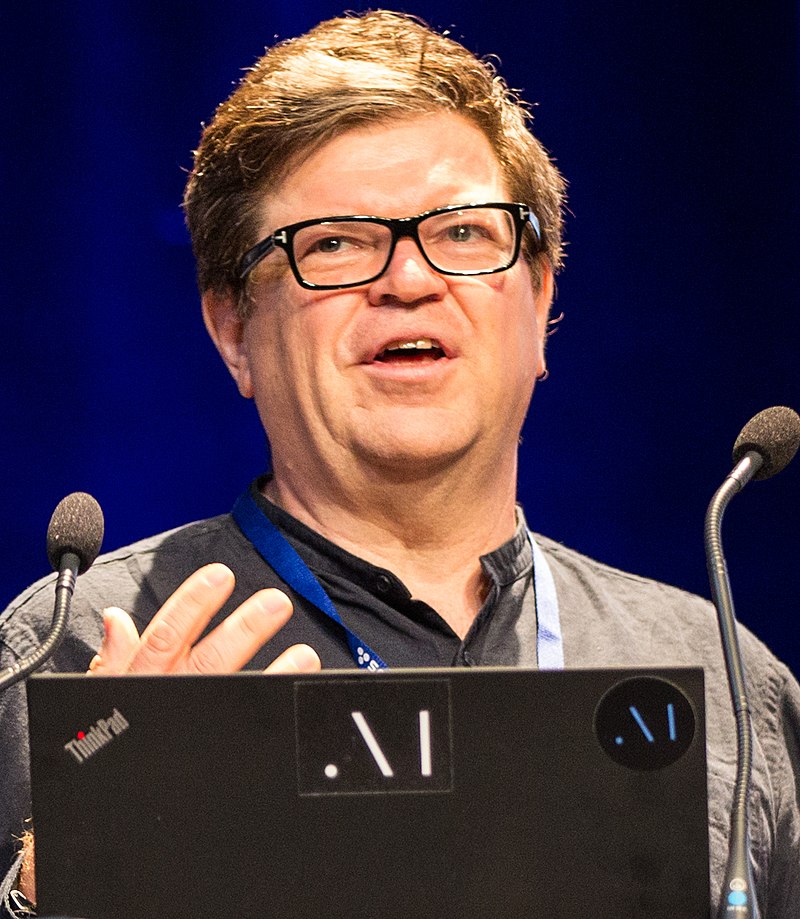

In a thought-provoking podcast conversation, Yann LeCun, Chief AI Scientist at Meta, sits down with Lex Fridman to discuss the current state and future of artificial intelligence (AI).

Introduction

This discussion covers the limitations of large language models (LLMs), the case for open-source AI, and the components LeCun believes are needed to build truly intelligent systems. Below is a summary of the main threads of their conversation.

Key Takeaways

- Limitations of LLMs: Yann LeCun argues that LLMs lack the ability to reason, plan, and understand the physical world.

- JEPA & Future AI: LeCun presents JEPA (Joint Embedding Predictive Architecture) as a step toward AI systems that learn abstract representations of the world through self-supervised learning.

- Open Source AI: LeCun advocates for open-source AI to foster innovation, diversity, and decentralized control.

- AI Safety & Bias: Bias is unavoidable in AI; LeCun argues that a diversity of open models is the best way to counter it.

- AI and Humanoid Robots: The future of robotics lies in developing systems that can handle complex, real-world tasks autonomously.

The Centralization of AI Power

Yann LeCun begins by expressing concern about the concentration of AI power in a few large companies. As a counterweight, he advocates open-source AI models that decentralize control and empower developers worldwide. Broad access, he argues, lets people in different cultures and communities build on AI in ways that suit them, spreading innovation well beyond a handful of firms. Lex Fridman shares LeCun's optimism, stating that people, in general, have good intentions and that open-source AI can enhance human potential by broadening access to intelligent systems.

The Limitations of Large Language Models (LLMs)

LeCun emphasizes that current LLMs such as GPT-4 and Meta's LLaMA, while impressive, are not on their own a path to human-level intelligence. He points out that these models:

- Rely heavily on text-based training data.

- Lack an understanding of the physical world.

- Cannot reason or plan beyond predicting text.

He contrasts human learning, which draws on vast amounts of sensory input from the world, with LLMs, which learn from written text alone. Human intelligence, LeCun argues, is grounded in real-world experience and continuous interaction with our environment, something current AI models largely lack.
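The contrast can be made concrete with a back-of-envelope estimate of how much raw data each learner sees. Every figure in the sketch below is an assumed round number for illustration, not a measurement. That includes the waking hours by age four, the optic-nerve bandwidth, the pretraining token count and the bytes per token.

```python
# Back-of-envelope comparison of raw training-data volume.
# ALL constants below are assumed round numbers for illustration only.

HOURS_AWAKE_BY_AGE_4 = 16_000    # assumed waking hours in a child's first four years
OPTIC_NERVE_BYTES_PER_S = 20e6   # assumed ~20 MB/s of visual input
LLM_TRAINING_TOKENS = 1e13       # assumed ~10^13 tokens of pretraining text
BYTES_PER_TOKEN = 2              # assumed ~2 bytes per token


def child_visual_bytes(hours: float, bytes_per_s: float) -> float:
    """Total bytes of visual input over the given number of waking hours."""
    return hours * 3600 * bytes_per_s


def llm_text_bytes(tokens: float, bytes_per_token: float) -> float:
    """Total bytes of text seen during pretraining."""
    return tokens * bytes_per_token


child = child_visual_bytes(HOURS_AWAKE_BY_AGE_4, OPTIC_NERVE_BYTES_PER_S)
llm = llm_text_bytes(LLM_TRAINING_TOKENS, BYTES_PER_TOKEN)
print(f"child: {child:.2e} bytes, LLM: {llm:.2e} bytes, ratio: {child / llm:.0f}x")
# child: 1.15e+15 bytes, LLM: 2.00e+13 bytes, ratio: 58x
```

Under these assumptions, the child has taken in roughly fifty times more bytes than the model, almost all of it sensory rather than linguistic. The exact ratio depends entirely on the assumed constants.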

Language vs. Grounded Intelligence

Lex Fridman proposes an alternative view, suggesting that language contains latent knowledge about the world. Could a world model be built from language alone? LeCun rejects this, stressing that true intelligence must be grounded in reality. For AI systems to approach human-like intelligence, they need more than language; they must understand physical laws and intuitive physics, learned from real-world or simulated environments.

Autoregressive LLMs: A Structural Problem

LeCun explains that LLMs operate autoregressively. They predict the next token (roughly, a word or part of a word) from the sequence that precedes it, append it, and repeat. This is fundamentally different from how humans think: humans often plan and reason at an abstract level before putting their thoughts into words. This gap prevents LLMs from genuinely understanding or modeling the world, limiting their ability to perform complex tasks that require planning or multi-step reasoning.
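To make the mechanism concrete, here is a toy autoregressive generator. The hand-written `BIGRAM` table is a hypothetical stand-in for a trained network, and it only looks at the previous token. What it shares with a real LLM is the loop:

- predict a distribution over the next token from the tokens so far;
- commit to one token and append it;
- repeat.

Nothing in the loop forms a plan for the whole sentence before the first word is emitted.

```python
# Toy autoregressive generation: each step predicts a distribution over the
# next token from the tokens produced so far, commits to one, and repeats.
# BIGRAM is a hypothetical hand-written table standing in for a trained model.

from typing import Dict, List

BIGRAM: Dict[str, Dict[str, float]] = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.3, "</s>": 0.2},
    "a": {"cat": 0.4, "dog": 0.6},
    "cat": {"sat": 0.7, "</s>": 0.3},
    "dog": {"ran": 0.8, "</s>": 0.2},
    "sat": {"</s>": 1.0},
    "ran": {"</s>": 1.0},
}


def next_token_distribution(context: List[str]) -> Dict[str, float]:
    """P(next token | context). A real LLM conditions on the whole context;
    this toy looks only at the last token."""
    return BIGRAM[context[-1]]


def generate_greedy(max_len: int = 10) -> List[str]:
    """Greedy decoding: always append the single most likely next token."""
    context = ["<s>"]
    for _ in range(max_len):
        dist = next_token_distribution(context)
        token = max(dist, key=dist.get)
        if token == "</s>":  # end-of-sequence token: stop generating
            break
        context.append(token)
    return context[1:]  # drop the start symbol


print(generate_greedy())  # ['the', 'cat', 'sat']
```

Once "the" is emitted it is never revised, and every later choice is conditioned on it. Real systems usually sample from the distribution rather than taking the maximum, but the one-token-at-a-time structure is the same.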

The Path Forward: Joint Embedding Predictive Architecture (JEPA)

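LeCun then explains JEPA (Joint Embedding Predictive Architecture), an approach that learns abstract representations of the world through prediction. A generative model must predict every detail of its target: every token for an LLM, every pixel for an image or video model. JEPA instead encodes both the input and the target into abstract representations and makes its prediction in that representation space. Details that cannot be predicted, such as noise, can therefore be left out of the representation, while the information that matters is kept.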

LeCun describes JEPA as a significant step toward intelligent systems that learn more efficiently from visual and other sensory data. Unlike LLMs, which predict text one token at a time, JEPA makes its predictions at a more abstract level. For example, it predicts the content of a whole masked region of an image rather than its individual pixels.
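The core JEPA idea can be sketched as a loss computed in representation space rather than in input space. In the sketch below, the linear "encoders", their weights, the predictor and the 4-to-2 dimensional shapes are all hypothetical toy choices, not Meta's implementation. The target encoder deliberately ignores the last two input dimensions, which play the role of unpredictable detail.

```python
# Minimal sketch of the JEPA idea: predict the *representation* of a target
# from the representation of its context, and measure error in embedding space.
# The linear "encoders", weights, and dimensions are hypothetical toy choices.

from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def squared_error(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def jepa_loss(x: Vector, y: Vector, enc_x: Matrix, enc_y: Matrix, pred: Matrix) -> float:
    """Prediction error of a JEPA-style model for one (context x, target y) pair.

    s_x = enc_x(x), s_y = enc_y(y); loss = || pred(s_x) - s_y ||^2.
    Details of y that enc_y discards never have to be predicted.
    """
    s_x = matvec(enc_x, x)
    s_y = matvec(enc_y, y)
    return squared_error(matvec(pred, s_x), s_y)


# 4-dimensional inputs compressed to 2-dimensional representations.
enc_x = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]
enc_y = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]  # ignores dims 3-4 ("noise")
pred = [[1.0, 0.0], [0.0, 1.0]]

x = [0.5, -0.2, 0.0, 0.0]
y_clean = [0.5, -0.2, 0.0, 0.0]
y_noisy = [0.5, -0.2, 3.0, -7.0]  # same content plus unpredictable detail

print(jepa_loss(x, y_clean, enc_x, enc_y, pred))  # 0.0
print(jepa_loss(x, y_noisy, enc_x, enc_y, pred))  # 0.0: the noise is never predicted
# A model scored in input space would have to predict the noise as well:
print(squared_error(x, y_noisy))  # 58.0
```

One caveat the sketch hides: if the encoders were trained jointly with no safeguard, they could map every input to the same vector and drive this loss to zero trivially. JEPA training therefore needs an anti-collapse mechanism. I-JEPA, for instance, updates its target encoder as an exponential moving average of the context encoder.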

Future of AI: Beyond Autoregressive Models

LeCun envisions a future where AI transcends the limitations of autoregressive models like LLMs. To reach human-level intelligence, systems need to move toward more adaptive models that can reason, plan, and understand complex environments. JEPA, for example, enables self-supervised learning and encourages abstraction, both of which are critical components of truly intelligent systems.

LeCun stresses that AI must eventually develop world models that allow it to predict, plan, and act based on sensory input, similar to how humans interact with the physical world.
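A world model turns planning into search. The system imagines where each candidate sequence of actions would lead, scores the predicted outcomes and then acts. The sketch below does this by brute force over a tiny action set. It replans at every step and executes only the first action, which is model-predictive control, a standard technique from control theory. The one-dimensional dynamics, cost function, action set and horizon are hypothetical toy choices. In the architecture LeCun describes, the world model would be learned from sensory data rather than written by hand.

```python
# Sketch of planning with a world model: imagine the outcome of candidate
# action sequences, score them with a cost, and act on the best one.
# Dynamics, cost, action set, and horizon are hypothetical toy choices.

from itertools import product
from typing import List, Sequence, Tuple

ACTIONS = (-1.0, 0.0, 1.0)  # hypothetical actions: step left, stay, step right


def world_model(state: float, action: float) -> float:
    """Predict the next state. Here: a point on a line that moves by `action`."""
    return state + action


def cost(state: float, goal: float) -> float:
    """How far a (predicted) state is from the goal."""
    return abs(goal - state)


def rollout_cost(state: float, actions: Sequence[float], goal: float) -> float:
    """Total cost of an imagined trajectory; nothing is executed."""
    total = 0.0
    for a in actions:
        state = world_model(state, a)
        total += cost(state, goal)
    return total


def plan(state: float, goal: float, horizon: int = 3) -> Tuple[float, ...]:
    """Exhaustively search all action sequences of length `horizon`."""
    return min(product(ACTIONS, repeat=horizon),
               key=lambda seq: rollout_cost(state, seq, goal))


def run(state: float, goal: float, steps: int = 5) -> List[float]:
    """Model-predictive control: replan each step, execute only the first action."""
    trajectory = [state]
    for _ in range(steps):
        first_action = plan(state, goal)[0]
        state = world_model(state, first_action)  # stand-in for acting in the world
        trajectory.append(state)
    return trajectory


print(run(state=0.0, goal=3.0))  # [0.0, 1.0, 2.0, 3.0, 3.0, 3.0]
```

Exhaustive search only works because the action set and horizon are tiny. With a learned, differentiable world model, the search over action sequences could instead be done by gradient-based optimization.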

Non-Contrastive Learning & Vision Models

In the conversation, LeCun discusses advances in non-contrastive self-supervised learning for images and video. Contrastive methods need explicit negative examples, pairs of inputs the model must push apart; non-contrastive methods do not. DINO learns by making the representations of different augmented views of the same image agree. I-JEPA predicts the representations of masked regions of an image rather than their pixels. Both let the model capture the essence of visual content without being bogged down by irrelevant details. This has significant implications for AI that must reason about dynamic environments, such as self-driving cars and robotic systems.
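Non-contrastive methods must still avoid a trivial solution. An encoder that maps every input to the same vector makes two views agree perfectly while learning nothing. One way to prevent this collapse is VICReg (variance-invariance-covariance regularization), a non-contrastive objective from LeCun's group that this summary does not otherwise mention. DINO and I-JEPA use different mechanisms. The pure-Python sketch below implements VICReg's three terms on tiny hand-made batches. The loss weights `lam`, `mu` and `nu` are illustrative.

```python
# Sketch of the VICReg objective, one non-contrastive self-supervised loss.
# Two "views" (e.g. two augmentations of the same images) are embedded; the loss
# pulls matching embeddings together while keeping each dimension informative,
# with no negative pairs. The weights lam, mu, nu are illustrative choices.

from math import sqrt
from typing import List

Batch = List[List[float]]  # N embeddings, each of dimension d


def _column(z: Batch, j: int) -> List[float]:
    return [row[j] for row in z]


def _mean(xs: List[float]) -> float:
    return sum(xs) / len(xs)


def invariance(za: Batch, zb: Batch) -> float:
    """Mean over samples of the squared distance between the two views' embeddings."""
    return _mean([sum((a - b) ** 2 for a, b in zip(ra, rb)) for ra, rb in zip(za, zb)])


def variance_term(z: Batch, gamma: float = 1.0, eps: float = 1e-4) -> float:
    """Hinge on the std of each dimension: penalizes collapse to a constant."""
    n, d = len(z), len(z[0])
    penalties = []
    for j in range(d):
        col = _column(z, j)
        m = _mean(col)
        var = sum((x - m) ** 2 for x in col) / (n - 1)
        penalties.append(max(0.0, gamma - sqrt(var + eps)))
    return _mean(penalties)


def covariance_term(z: Batch) -> float:
    """Sum of squared off-diagonal covariances divided by d: decorrelates dimensions."""
    n, d = len(z), len(z[0])
    means = [_mean(_column(z, j)) for j in range(d)]
    total = 0.0
    for i in range(d):
        for j in range(d):
            if i != j:
                c = sum((row[i] - means[i]) * (row[j] - means[j]) for row in z) / (n - 1)
                total += c ** 2
    return total / d


def vicreg_loss(za: Batch, zb: Batch, lam: float = 25.0, mu: float = 25.0, nu: float = 1.0) -> float:
    return (lam * invariance(za, zb)
            + mu * (variance_term(za) + variance_term(zb))
            + nu * (covariance_term(za) + covariance_term(zb)))


# Collapsed embeddings: perfectly invariant, but they carry no information.
collapsed = [[1.0, 1.0]] * 4
# Spread-out, decorrelated embeddings that also match across the two views.
spread = [[2.0, 0.0], [-2.0, 0.0], [0.0, 2.0], [0.0, -2.0]]

print(vicreg_loss(collapsed, collapsed))  # about 49.5: collapse is penalized
print(vicreg_loss(spread, spread))        # 0.0
```

The collapsed batch is perfectly invariant yet still pays the variance penalty, while the spread-out batch scores zero. In real training, both views would come from a neural encoder applied to two augmentations of the same images.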

Limitations of Hierarchical Planning in AI

Fridman raises the concept of hierarchical planning, in which a task is broken down into smaller, manageable subgoals, such as planning a trip from New York to Paris. LeCun points out that while humans decompose tasks like this effortlessly, current AI architectures struggle to learn to do so. Hierarchical planning requires representations at multiple levels of abstraction, and no current system reliably learns those levels on its own.
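Hierarchical planning can be pictured as recursive goal decomposition. In the sketch below, the `SUBGOALS` table and its entries are hypothetical and hand-written. Producing such a table is exactly what current systems cannot yet do for themselves: the open problem is learning the intermediate levels of abstraction, not expanding them once they exist.

```python
# Hierarchical planning sketched as recursive goal decomposition.
# The SUBGOALS table is hypothetical and hand-written; the hard, unsolved part
# is having a system learn such intermediate levels of abstraction itself.

from typing import Dict, List

# Each goal maps to an ordered list of subgoals. Goals absent from the table
# are treated as primitive actions that can be executed directly.
SUBGOALS: Dict[str, List[str]] = {
    "go from New York to Paris": ["get to the airport", "fly to Paris"],
    "get to the airport": ["go down to the street", "hail a taxi", "ride to the airport"],
    "go down to the street": ["stand up from the chair", "walk to the door", "take the elevator"],
    "fly to Paris": ["check in", "board the plane", "land in Paris"],
}


def expand(goal: str) -> List[str]:
    """Depth-first expansion of a goal into an ordered list of primitive actions."""
    if goal not in SUBGOALS:
        return [goal]
    actions: List[str] = []
    for sub in SUBGOALS[goal]:
        actions.extend(expand(sub))
    return actions


steps = expand("go from New York to Paris")
print(len(steps), "primitive actions:", steps)
```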

AI Safety & Open Source Platforms

A key theme of the conversation is AI safety and the role of open-source systems in mitigating bias. LeCun stresses that bias is unavoidable in AI. The solution, in his view, lies in diversity: many AI models tailored to different cultures, languages, and worldviews. He highlights the role of open-source AI in fostering such diversity, allowing communities around the world to adapt AI technologies to their own contexts.

By opening up AI development, companies and governments can create models that reflect local values and ethical standards. LeCun also notes that open-source AI ensures that no single company monopolizes control over AI technologies, fostering a competitive ecosystem that benefits everyone.

Hallucinations in LLMs

The pair also address hallucinations, in which an LLM produces incorrect or nonsensical answers. LeCun attributes these partly to compounding errors in autoregressive generation. Each token is produced from the ones before it, so a small mistake early on can push the rest of the answer off track. In addition, the space of possible prompts is so vast that LLMs will inevitably fail on some inputs, no matter how large their training data.
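A simplified model often used to make this compounding-error argument assumes that each generated token independently has a small probability e of sending the answer off track. The probability that an n-token answer stays on track is then (1 - e)^n, which shrinks exponentially with length. The independence assumption and the value e = 0.01 below are illustrative, not measured.

```python
# Compounding-error model: if each token independently has probability e of
# derailing the answer, an n-token answer stays on track with probability (1 - e) ** n.
# Independence and e = 0.01 are illustrative assumptions.


def p_stays_correct(e: float, n: int) -> float:
    """Probability that none of n tokens derails the answer, assuming independence."""
    return (1.0 - e) ** n


for n in (10, 100, 1000):
    print(n, f"{p_stays_correct(0.01, n):.3g}")
# 10 0.904
# 100 0.366
# 1000 4.32e-05
```

Under this model, even a 1% per-token error rate leaves only about a one-in-three chance of a clean 100-token answer. Real errors are neither independent nor always unrecoverable, so this is an argument about trends rather than a prediction of actual error rates.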

Energy-Based Models: The Future of Reasoning AI

LeCun also discusses energy-based models, which he believes could change the way AI handles reasoning and decision-making. An energy-based model assigns a scalar score, or "energy", to each pairing of an input and a candidate output, with low energy meaning the two are compatible. Instead of producing a response word by word (as in autoregressive models), such a system would search a continuous, abstract representation space for the answer with the lowest energy, and only then turn that answer into text. This could lead to AI systems that reason more effectively, especially on complex tasks.
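Inference in an energy-based model is an optimization rather than a single forward pass. The toy below uses gradient descent to find the answer y that minimizes an energy E(x, y). The quadratic energy (which says "y should equal 2x"), the step size and the iteration count are hypothetical choices. In the setup described in the conversation, E would be a learned network and the optimized y an abstract representation that is then decoded into text.

```python
# Toy energy-based inference: instead of emitting an answer token by token,
# search a continuous space for the y that minimizes an energy E(x, y).
# The quadratic energy, step size, and iteration count are hypothetical.

from typing import List

Vector = List[float]


def energy(x: Vector, y: Vector) -> float:
    """Low when y is compatible with x. Here: compatible means y == 2 * x."""
    return sum((yi - 2.0 * xi) ** 2 for xi, yi in zip(x, y))


def energy_grad_y(x: Vector, y: Vector) -> Vector:
    """Gradient of the energy with respect to the candidate answer y."""
    return [2.0 * (yi - 2.0 * xi) for xi, yi in zip(x, y)]


def infer(x: Vector, steps: int = 100, lr: float = 0.1) -> Vector:
    """Gradient descent on y, starting from the zero vector."""
    y = [0.0] * len(x)
    for _ in range(steps):
        g = energy_grad_y(x, y)
        y = [yi - lr * gi for yi, gi in zip(y, g)]
    return y


x = [1.0, -0.5]
y_star = infer(x)
print([round(v, 4) for v in y_star])  # approximately [2.0, -1.0]
```

Here the minimum is known in closed form (y = 2x), which makes it easy to check that the search finds it. The interesting case is when E is a learned network and no closed form exists, so optimization is the only way to find a low-energy answer.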

Addressing AI “Doom”

Fridman and LeCun also tackle the idea of AI doom: the fear that AI systems will become uncontrollable and dominate humanity. LeCun dismisses this scenario, arguing that the desire to dominate does not follow from intelligence itself. Future AI systems, according to LeCun, will be developed incrementally, leaving time to design safeguards and checks as the technology develops.

The Role of Humanoid Robots

Touching on the future of robotics, LeCun envisions humanoid robots performing household tasks like cooking and cleaning. However, he emphasizes that these systems will need to develop sophisticated planning and world-modeling capabilities to function autonomously in dynamic environments. While robotics has made strides, there is still much work to be done in creating AI systems that can understand and interact with the physical world at a human level.

Conclusion: AI as a Force for Good

As the conversation wraps up, Yann LeCun remains optimistic about AI’s potential. He envisions a future where AI enhances human capabilities, providing everyone with intelligent assistants that can solve problems, learn from experiences, and plan for the future. Comparing this AI revolution to the transformative effect of the printing press, LeCun believes AI will empower society to solve some of the world’s biggest challenges.